FAQ

This page answers frequently asked questions about the Ansys SimAI Pro application models.

Training data and model performance

Are the Ansys SimAI Pro application models translation invariant?

Models remain largely unaffected by data translation during both the training and prediction phases. Adjusting the absolute position of your geometry will not improve your model's performance. However, it can introduce border effects if the geometry is too close to the limits of the Domain of analysis. In general, when the absolute position of your data varies, use the relative definition of the Domain of analysis to ensure proper model training.
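The idea behind a relative definition can be illustrated with a minimal NumPy sketch. It is not the SimAI Pro API: the `relative_domain` function, the sample points, and the margin values are all hypothetical, and the application's own relative definition may be parameterized differently. The sketch only shows that a box defined relative to the geometry keeps the same size and margins when the geometry is translated.

```python
import numpy as np

def relative_domain(points, margin):
    """Axis-aligned box around `points`, padded by `margin` on every side.

    Hypothetical helper for illustration only, not a SimAI Pro function.
    """
    lower = points.min(axis=0) - margin
    upper = points.max(axis=0) + margin
    return lower, upper

# Hypothetical geometry: four points of a unit-sized shape.
geometry = np.array([[0.0, 0.0, 0.0],
                     [1.0, 0.0, 0.0],
                     [0.0, 1.0, 0.0],
                     [0.0, 0.0, 1.0]])
shift = np.array([10.0, -5.0, 2.0])   # translation applied to the geometry
margin = np.array([2.0, 1.0, 1.0])    # padding per axis (made-up values)

lower_a, upper_a = relative_domain(geometry, margin)
lower_b, upper_b = relative_domain(geometry + shift, margin)

# The box follows the geometry: same size, only its origin moves.
size_a = upper_a - lower_a
size_b = upper_b - lower_b
print(size_a, size_b, lower_b - lower_a)
```

Because the box moves with the geometry, the distance between the geometry and the domain limits stays constant, which is what avoids the border effects mentioned above.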

Are the Ansys SimAI Pro application models rotation invariant?

Models are sensitive to data rotation. Importing the same geometry with different orientations produces different results. If rotation is an important aspect of your study, including several orientations in the training data makes your model more effective at generalizing.
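As a rough illustration of why orientation matters, the following NumPy sketch rotates a small point set about the z-axis. The `rotate_z` helper, the sample points, and the chosen angles are assumptions made for this example and are not part of the SimAI Pro application. The shape itself is unchanged by the rotation, yet its coordinates differ, so each orientation is a distinct input.

```python
import numpy as np

def rotate_z(points, angle_deg):
    """Rotate an (N, 3) array of points about the z-axis by `angle_deg` degrees."""
    theta = np.radians(angle_deg)
    c, s = np.cos(theta), np.sin(theta)
    rotation = np.array([[c, -s, 0.0],
                         [s,  c, 0.0],
                         [0.0, 0.0, 1.0]])
    return points @ rotation.T

# Hypothetical geometry: three points, one on each axis.
geometry = np.array([[1.0, 0.0, 0.0],
                     [0.0, 2.0, 0.0],
                     [0.0, 0.0, 3.0]])

# Same shape, several orientations (angles chosen arbitrarily).
orientations = {angle: rotate_z(geometry, angle) for angle in (0, 15, 30, 45)}

for angle, points in orientations.items():
    print(angle, np.round(points[0], 3))
```

Exporting the geometry in each orientation of interest and running the corresponding simulations is one way to obtain training data that covers rotation.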

Is it possible to anticipate the model’s performance during its construction?

It is impossible to interpret or monitor a model’s performance during its construction. Two approaches are effective for achieving satisfactory performance:

Build the model progressively. Begin with a small dataset and a short build duration. As you gain confidence in your AI model, gradually increase the number of training data points and extend the build duration, ideally modifying only a few parameters at a time.

Use the Downsampling Option (BETA). For details, see the section Downsampling (BETA feature).

How does the Ansys SimAI Pro application manage to build models with so little training data, compared with the recommended minimum number of data points for other neural network approaches?

Thanks to its 3D Deep Learning approach, the model can extract more information from 3D data than another model can extract from scalar inputs/outputs: a 3D field contains more information than a scalar. That said, the Ansys SimAI Pro application still requires some data, as any Deep Learning method does, and substantially more data when training on scalar predictions.

For example, consider two use cases that may require more data:

In very specific contexts such as statistical or risk assessment approaches, typically kurtosis prediction, there is no interest in learning the full 3D field because only a few nodes of the full field matter. The 3D problem therefore naturally shrinks into a “scalar” problem on the input side. As a consequence, the Ansys SimAI Pro application requires more data for this kind of problem, as only a little useful information can be derived from the 3D field.

When creating a constant field for scalar prediction, the data requirement increases because a constant field does not contain much information to generalize from.

Moreover, the SimAI Pro model is based on continuity. It uses a continuous function to represent a smooth and predictable relationship between inputs and outputs over a specified domain.

The function is then sampled across the domain at collocation points to discretize the data, enabling visualization and computation.

Postprocessing functions then use the sampled data and collocation points to generate output values that can be visualized (surface.vtp) or integrated across surfaces to derive summary metrics (Global Coefficients). These postprocessing functions give detailed insight into the model’s predictions so that you can assess and validate its performance.
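The sample-then-integrate idea described above can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the application's implementation: the `pressure` function is a made-up continuous field, the surface is a flat unit square, and the collocation points are simply the centers of a regular grid of cells.

```python
import numpy as np

def pressure(x, y):
    """Hypothetical continuous field defined over the unit square."""
    return x + 2.0 * y

# Collocation points: centers of an n-by-n grid of equal cells.
n = 20
edges = np.linspace(0.0, 1.0, n + 1)
centers = 0.5 * (edges[:-1] + edges[1:])
xc, yc = np.meshgrid(centers, centers)
cell_area = (1.0 / n) ** 2

# Discretize the continuous field by sampling it at the collocation points.
samples = pressure(xc, yc)

# Integrate the sampled field over the surface to get one summary value.
global_coefficient = float(np.sum(samples * cell_area))
print(global_coefficient)
```

For this linear field, the midpoint sum matches the analytical integral over the unit square, which is 1.5. The sampled values play the role of the field that can be visualized, and the integrated value plays the role of a summary metric.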

Speed vs Performance

How does prediction time correlate with the number of cells in my geometry?

The prediction time is approximately linearly correlated with the number of cells.

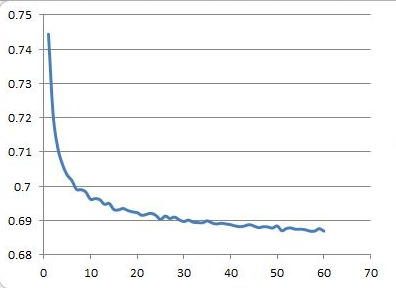
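If the relationship is approximately linear, a straight-line fit through a few of your own timings gives a rough estimate for other mesh sizes. The sketch below uses NumPy's `polyfit`. The cell counts and timings are placeholder values chosen to be exactly linear, not measurements from the application, and should be replaced with your own data.

```python
import numpy as np

# Placeholder values for illustration only (not measured timings).
cell_counts = np.array([100_000, 200_000, 400_000, 800_000], dtype=float)
prediction_seconds = np.array([2.0, 3.5, 6.5, 12.5])

# Degree-1 least-squares fit: time ~ slope * cells + intercept.
slope, intercept = np.polyfit(cell_counts, prediction_seconds, deg=1)

def estimate_seconds(n_cells):
    """Estimate the prediction time for a geometry with `n_cells` cells."""
    return slope * n_cells + intercept

estimate = estimate_seconds(600_000)
print(f"slope={slope:.2e} s/cell, intercept={intercept:.2f} s, estimate={estimate:.2f} s")
```

Such an estimate is only meaningful within the range of cell counts that you measured.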

Why is there sometimes only marginal improvement even though I chose a longer build duration?

The learning (training) process of a neural network is iterative: calculations are carried out forwards and backwards through each layer of the network until the model has converged. When you choose a longer build duration, you allow the model to train for a higher number of epochs/iterations, but there is no significant improvement if the model is already in the asymptotic state. This is similar to how a solver's convergence stops improving beyond a given number of iterations. Once you have launched a build, the training process cannot be stopped early when convergence is reached, and it is not possible to detect an asymptotic state during the process.

Figure 5. Example of the convergence of a loss function (y-axis) over iterations (x-axis)
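The diminishing return of a longer build can be illustrated with a synthetic loss curve. The exponential decay toward a floor used below is an assumption made for this sketch, as are the parameter values. It does not represent the actual loss of a SimAI Pro build, which cannot be monitored during training.

```python
import numpy as np

def synthetic_loss(iteration, start=1.0, floor=0.05, tau=1000.0):
    """Made-up loss curve: exponential decay from `start` toward `floor`."""
    return floor + (start - floor) * np.exp(-iteration / tau)

def gain_from_doubling(iterations):
    """Loss reduction obtained by training twice as long."""
    return synthetic_loss(iterations) - synthetic_loss(2 * iterations)

# Doubling a short run helps a lot; doubling a long run barely helps.
early_gain = gain_from_doubling(1_000)
late_gain = gain_from_doubling(5_000)
print(f"early gain: {early_gain:.4f}, late gain: {late_gain:.4f}")
```

On this synthetic curve, doubling the training length from 5,000 iterations reduces the loss far less than doubling it from 1,000 iterations, which mirrors the asymptotic behavior described above.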

Can the training stage be expedited? Is there an option to leverage more GPUs/CPUs for training?

There is no option to speed up training by leveraging more GPUs/CPUs. In specific conditions, it is possible to downsample the training dataset. For more information, see Downsampling (BETA feature).